RICE Score: A Balanced Prioritization Framework

Imagine you’re a product manager tasked with driving user engagement for your company. You’ve got a ton of ideas flying around, from developing new features to revamping your website.

But how do you know which ideas to prioritize? That’s where the RICE scoring model comes in.

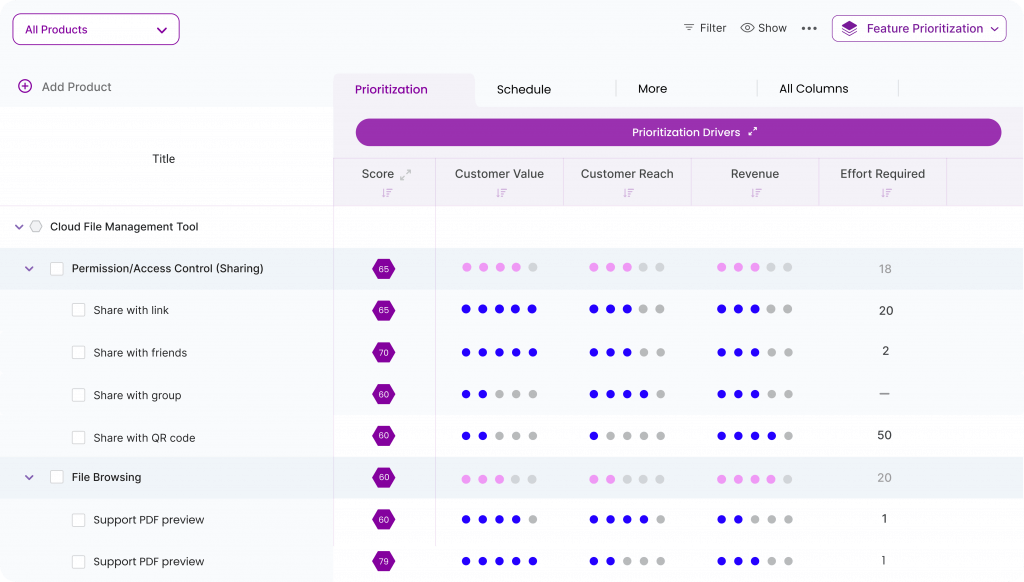

RICE is a powerful prioritization framework that helps you score potential initiatives based on four factors: reach, impact, confidence, and effort. By using RICE, you can make better-informed decisions, minimize personal biases, and defend your priorities to other stakeholders.

Think of the RICE framework as your secret weapon in the battle for product success. It’s the tool you need to sort through the noise and identify the initiatives that will truly move the needle.

Whether you’re a seasoned product pro or just starting out, the RICE scoring model is an essential part of your toolkit.

Are you ready to learn all about the RICE scoring model?

What Is a RICE Score?

RICE stands for Reach, Impact, Confidence, and Effort.

It is a simple and popular prioritization framework.

The RICE framework determines the importance of various features, ideas, and initiatives that people may have for a product.

A RICE score lets the product manager quantify the importance of each item and compare it against the others.

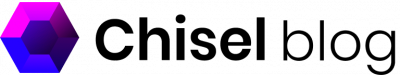

Product management software such as Chisel employs the RICE framework, making the prioritization process a breeze.

The image above is a screenshot of Chisel’s Treeview. When you’re looking at a feature, you can review the four categories that Chisel uses to calculate priority: Customer Value, Customer Reach, Revenue, and Effort. Clicking on any of these displays the corresponding bubble, where you can adjust the value until it matches your needs.

The formula for calculating the RICE score is as follows:

RICE Score = (Reach × Impact × Confidence) / Effort
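
As a minimal sketch, the formula can be written as a small Python function. The function name `rice_score` and the sample inputs below are illustrative assumptions, not part of any particular tool.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """Compute (reach * impact * confidence) / effort."""
    if effort <= 0:
        # Effort is the divisor, so it must be a positive amount of work.
        raise ValueError("effort must be greater than zero")
    return (reach * impact * confidence) / effort


# Hypothetical inputs: 1,500 people reached, an impact of 1,
# 80% confidence (written as 0.8), and 2 units of effort.
example = rice_score(reach=1500, impact=1, confidence=0.8, effort=2)
print(example)
```

Because effort is the divisor, doubling the effort halves the score, while doubling any of the other three factors doubles it.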

Due to the nature of a product manager’s job, they have many different ideas they can work on at any moment.

RICE prioritization helps organize and ensure that the product manager knows what to work on next.

How Does the RICE Scoring Model Work?

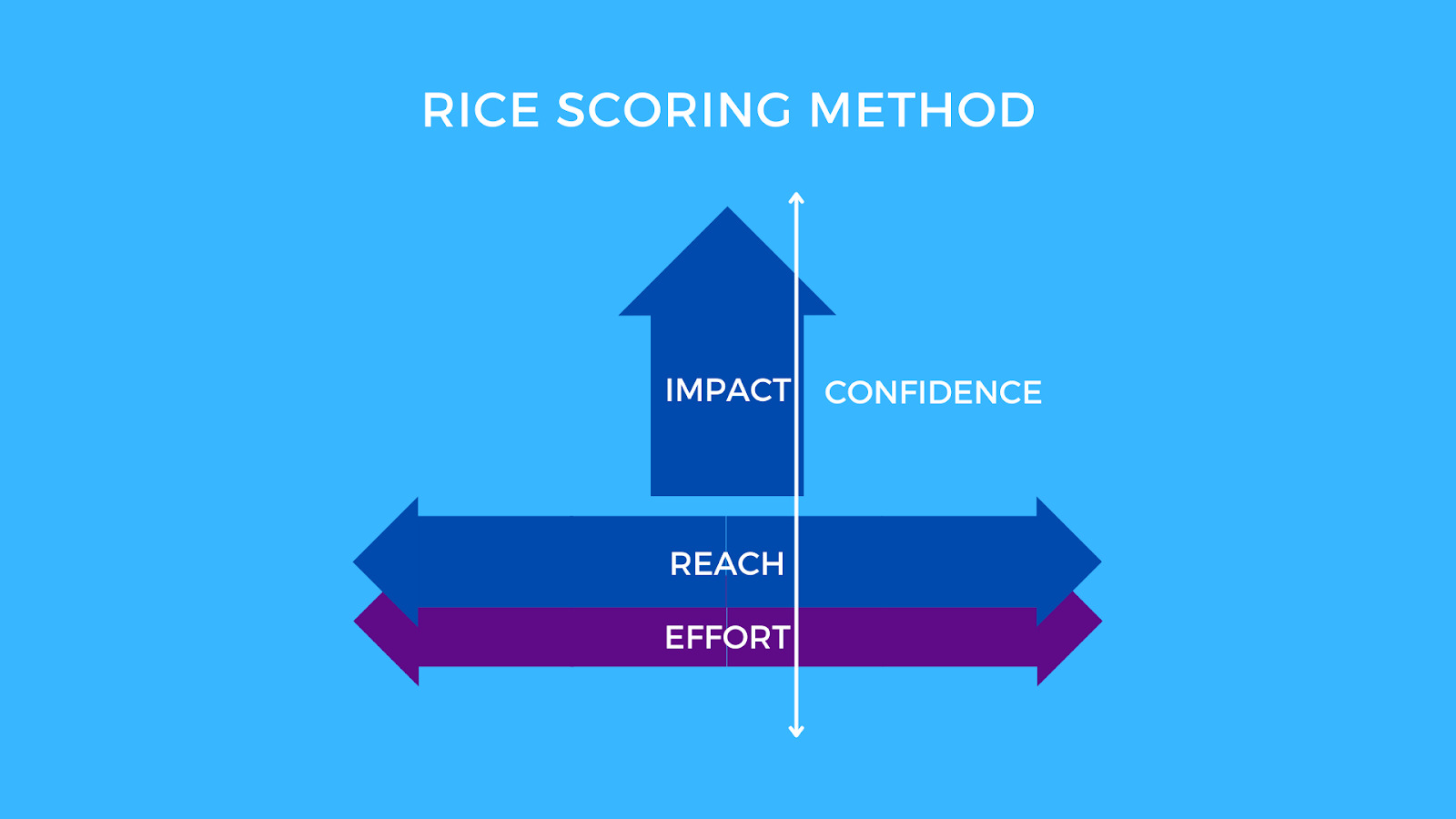

Reach:

The Reach score is the number of people you will impact by implementing a feature in a given timeframe.

One useful aspect of the RICE prioritization framework is that you define the timeframe yourself. You decide the period over which to measure and the type of user you plan to reach.

You can quantify reach using internal product metrics or external surveys answered by your target audience.

Here is an example of calculating reach. Let’s say you are the product manager for a file transfer application.

You want to calculate the reach score for a feature that tells people when they are close to running out of storage. First, find out how many people get close to the storage cap in a given period (let’s say a day).

Then, decide how long the measurement period should be (let’s say one month).

Suppose 50 people a day get close to the storage cap, and your reach period is one month.

Then, taking a month as 30 days, the reach for the feature would be 50 × 30 = 1,500 people.
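
The same arithmetic can be sketched in Python. The helper name `reach_for_period` is hypothetical, and the 30-day month is an assumption carried over from the example above.

```python
def reach_for_period(people_per_day: int, days_in_period: int) -> int:
    """Estimate reach as a daily count multiplied by the length of the period."""
    if people_per_day < 0 or days_in_period < 0:
        raise ValueError("inputs must be non-negative")
    return people_per_day * days_in_period


# 50 people a day approach the storage cap; the period is one month,
# assumed here to be 30 days.
monthly_reach = reach_for_period(people_per_day=50, days_in_period=30)
print(monthly_reach)  # 1500
```

Whatever period you pick, apply the same period to every feature you score so that the reach numbers stay comparable.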

Sometimes calculating the reach may be difficult if a product is very new and does not have many users.

Calculating this score is also challenging if your internal tools are inaccurate or unavailable. In such a case, statistics from other companies in your field can come in handy.

However, this approach isn’t perfect, as those companies may serve target audiences that differ from yours. Another way around the problem is to combine the reach and impact scores into a single score.

What matters most is how much the feature will affect the revenue it generates.

Impact:

The impact score estimates your feature’s effect on each user.

In short, reach is about how many people a feature affects, while impact is about how much it affects each of them.

To estimate impact, think about how much the feature will help your company achieve a particular goal. One common goal for most companies is to increase the likelihood of converting someone into a repeat, long-term customer.

The best way to standardize impact is to rate features on a fixed scale.

A typical scale is 3 for “massive impact,” 2 for “high impact,” 1 for “medium impact,” 0.5 for “low impact,” and 0.25 for “minimal.”
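
One way to keep ratings consistent is to store the scale as a lookup table, so that every feature is rated with the same labels. This is only a sketch: the names `IMPACT_SCALE` and `impact_value` are hypothetical, and the numbers are the typical scale quoted above.

```python
# The typical impact scale from the text, keyed by label.
IMPACT_SCALE = {
    "massive": 3.0,
    "high": 2.0,
    "medium": 1.0,
    "low": 0.5,
    "minimal": 0.25,
}


def impact_value(label: str) -> float:
    """Translate an impact label into its numeric multiplier."""
    key = label.strip().lower()
    if key not in IMPACT_SCALE:
        raise ValueError(f"unknown impact label: {label!r}")
    return IMPACT_SCALE[key]


print(impact_value("High"))  # 2.0
```

Rejecting unknown labels keeps the team from inventing in-between values that would make scores harder to compare.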

Without a clear goal, the impact score and the RICE framework as a whole become less effective.

It is helpful to have strong communication with all teams and stakeholders.

That helps determine the goal for a given time so that prioritization can be more effective.

Confidence:

This number represents the certainty of the reach, impact score, and corresponding benefit.

Effectively, it aims to act as a fail-safe against reach and impact scores that are too high due to accidental bias in planning.

Let’s say you have estimates for the reach, impact, and effort scores, yet there are gaps in the data behind them. In that case, the confidence score lets you account for that uncertainty.

As with the impact score, you assign confidence using a fixed scale.

A typical scale is 100% for high confidence, 80% for medium confidence, and 50% for low confidence.
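
To see how confidence acts as a discount, the sketch below multiplies reach and impact by each level of the typical scale. The names `CONFIDENCE_SCALE` and `discounted_benefit` and the sample reach and impact values are assumptions made for illustration.

```python
# The typical confidence scale from the text, written as fractions.
CONFIDENCE_SCALE = {"high": 1.0, "medium": 0.8, "low": 0.5}


def discounted_benefit(reach: float, impact: float, confidence_label: str) -> float:
    """Scale the estimated benefit (reach * impact) by the confidence level."""
    if confidence_label not in CONFIDENCE_SCALE:
        raise ValueError(f"unknown confidence label: {confidence_label!r}")
    return reach * impact * CONFIDENCE_SCALE[confidence_label]


# Hypothetical feature: reach of 1,500 people and an impact of 2.
for label in ("high", "medium", "low"):
    print(label, discounted_benefit(1500, 2, label))
```

With identical reach and impact, a low-confidence estimate is worth half of a high-confidence one, which is how the confidence factor guards against overly optimistic scores.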

Whenever the confidence score is low, product managers should look for more data to improve the accuracy of their ratings.

A low confidence score should be a last resort when no alternative exists. It shouldn’t be the default option when one is too lazy to seek more information.

Similarly, a high confidence score should reflect the product manager’s confidence in the feature’s success, based on several data points, mockups, and designs of the actual feature.

Effort:

This score represents how long various teams will take to implement a feature. The effort score captures the cost of implementation.

It contrasts with the other three RICE factors, which capture a feature’s upside. The most common way to quantify effort is in “person-months”: the amount of work one team member can do in a month.

Suppose it took seven people one week to build the feature in the file transfer application example. Then, it would have an effort score of seven person-weeks. Whichever unit you choose, use it for every feature. Standardizing effort this way lets people compare how long various features and products will take.

However, effort can be hard to estimate at first glance. Making these ratings as accurate as possible requires close communication with the engineering and design teams.
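
The person-week example can be sketched as follows. The function names are hypothetical, and the conversion to person-months assumes a month of four working weeks, which is an assumption of this sketch and not something the framework prescribes.

```python
def person_weeks(people: int, weeks: float) -> float:
    """Effort in person-weeks: headcount multiplied by duration in weeks."""
    return people * weeks


def to_person_months(effort_in_weeks: float, weeks_per_month: float = 4.0) -> float:
    """Convert person-weeks to person-months.

    Assumption: one month is treated as four working weeks by default.
    """
    if weeks_per_month <= 0:
        raise ValueError("weeks_per_month must be greater than zero")
    return effort_in_weeks / weeks_per_month


# Seven people working for one week, as in the file transfer example.
effort_weeks = person_weeks(people=7, weeks=1)
effort_months = to_person_months(effort_weeks)
print(effort_weeks, effort_months)
```

Under these assumptions the feature costs seven person-weeks, or 1.75 person-months. Converting everything to one unit before scoring keeps the effort values comparable across features.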

Where Did the RICE Score Model Come From?

The RICE model was developed by Intercom – a popular messenger and customer communications platform.

Intercom had been using scoring systems for prioritizing different sets of ideas. However, they couldn’t find the one that best suited their needs.

After many failed attempts, they devised the RICE framework and the RICE prioritization formula to combine the four factors. The RICE score can give product managers and their teams a bird’s-eye view of the various project pipelines and priorities.

Why Use the RICE Framework for Prioritization?

As a product manager, deciding what to work on can be daunting. With limited resources and numerous priorities, making informed decisions is essential. That’s why many product managers and teams rely on the RICE framework. This prioritization tool is widely adopted.

Let us explore the benefits of the RICE framework.

- The RICE framework reduces biases and prioritizes the features with the most significant impact on the greatest number of people.

- The framework measures confidence and seeks data and validation to ensure accurate decision-making.

- The RICE framework encourages product management best practices, including discovery work, supporting decisions with data, focusing on impact, and customer centricity through reach.

- Discovery work involves gathering information to validate assumptions and prioritize impactful features.

- Supporting decisions with data means using data to make informed decisions.

- Focusing on impact means prioritizing features that significantly affect the product, business, and customers.

- Striving for customer centricity through reach means prioritizing features that benefit the most customers.

How to Prioritize Tasks With the RICE Framework?

Prioritizing tasks is vital once the team has decided on the ideas, found solutions, and built the product roadmap.

But deciding what to undertake from the roadmap can be a tedious task for a product manager.

Why, you may ask?

As individuals, we always bring our unique personalities and ideologies to the table.

That’s good!

The problem arises when we push our ideas without thinking about the other possible solutions to reach the end goal.

Let us look at some of the points product managers can consider while prioritizing tasks using the RICE framework.

Product managers may unknowingly bring biases and preconceived notions with them. Two types of considerations come up when prioritizing tasks:

A) Objective Consideration: Product managers may consider the company’s financial data, customer experience, and other measurable aspects while prioritizing tasks.

B) Subjective Consideration: Product managers may also be influenced by subconscious beliefs while prioritizing tasks. An individual’s strengths and weaknesses may shape these beliefs.

Other biases could stem from the product manager’s beliefs about the company’s or project’s outcome, the team’s abilities, stakeholders’ beliefs, and others. Before prioritizing new tasks, product managers need to consider whether there are already projects that the team could work on.

It’s easy to go ahead and prioritize the ideas you consider worthwhile.

But it’s essential to look at the other pool of ideas and the reach and impact they might have.

Another bias to avoid is sidelining tasks that may require more effort than others on the roadmap. These are some of the preconceived notions that you should address while prioritizing tasks.

This job is better organized when you use the RICE prioritization method. It is even easier with product management software that includes the framework.

The RICE framework and the RICE score help product managers to view the tasks on the product roadmap with more discipline.

It helps to minimize biases and complete the projects smoothly.
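
As a rough sketch of what this looks like in practice, the example below scores a small backlog and sorts it from highest to lowest RICE score. The `Initiative` class, the `rank` helper, and every number in the backlog are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    reach: float       # people affected in the chosen period
    impact: float      # e.g. 0.25, 0.5, 1, 2, or 3
    confidence: float  # e.g. 0.5, 0.8, or 1.0
    effort: float      # e.g. person-months; must be positive

    @property
    def rice(self) -> float:
        """RICE score: (reach * impact * confidence) / effort."""
        return (self.reach * self.impact * self.confidence) / self.effort


def rank(initiatives: list[Initiative]) -> list[Initiative]:
    """Return the initiatives sorted from highest to lowest RICE score."""
    return sorted(initiatives, key=lambda item: item.rice, reverse=True)


# Hypothetical backlog; every number here is invented for the example.
backlog = [
    Initiative("Storage warning", reach=1500, impact=1, confidence=0.8, effort=2),
    Initiative("Website revamp", reach=5000, impact=0.5, confidence=0.5, effort=6),
    Initiative("Sharing shortcut", reach=800, impact=2, confidence=1.0, effort=1),
]

for item in rank(backlog):
    print(f"{item.name}: {item.rice:.1f}")
```

In this made-up backlog, the initiative with the largest reach ends up last because its low confidence and high effort outweigh it. Surfacing that kind of result is what scoring every item the same way is for.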

Pros and Cons of RICE Prioritization Method

Pros of the RICE Framework

Easier to grasp:

The RICE model is simple for non-technical stakeholders to understand. It’s a great way to introduce the concept of prioritization and tradeoffs in product development.

Based on the data:

You can use the RICE prioritization model as input data for product roadmap planning meetings.

It will clarify what you should offer next. With the RICE framework in mind, your team can establish clear success criteria for each project or feature.

That clarity will help inform your team’s priorities and decision-making.

It is especially useful when deciding on new features because you have a set of guidelines for how they will be measured going forward.

For example, you can measure “reach” by looking at how many new users sign in due to the new product launch or feature.

Or suppose you’re working with an existing product.

In that case, you can measure “impact” by looking at how app usage changes among existing users. This information becomes incredibly helpful in objectively evaluating whether or not a feature is working.

Realistic view:

The RICE prioritization formula is helpful when planning out your product roadmap.

You must be realistic about what you can accomplish within a specific time frame and how many resources it will take. Otherwise, things might fall through the cracks or end up half-baked.

RICE prioritization also helps teams understand their users’ goals and the impact a new product or feature has on them.

It provides a checklist for evaluating priorities and making decisions around product development to avoid expensive mistakes.

User friendly:

The RICE framework is also helpful in making decisions about optimizing features and identifying new opportunities. The RICE prioritization method takes into account feature requests from customers and other user feedback.

In short, user experience is an essential factor in the RICE model.

SMART goals:

Setting SMART goals improves efficiency in any working model.

And this is true even when using the RICE prioritization method.

When using the RICE model to arrive at a single RICE score, it is advisable to define the product metrics in a SMART way.

SMART stands for specific, measurable, attainable, relevant, and timely. For example, reach, one of the factors in the RICE model, is measured over a set period of time.

Cons of the RICE Framework

Comprehensiveness:

The RICE prioritization formula is simple to understand. However, it is designed for complex tasks and projects.

Hence, using the RICE score to decide on smaller and simpler tasks may complicate things.

Inaccurate estimations:

The RICE score and its underlying estimates may not always be accurate. The RICE prioritization method relies on the confidence factor to account for this uncertainty, and that factor is itself an estimate.

Dependencies not considered:

The RICE score does not account for dependencies between products, product managers, and teams. As a result, teams often have to de-prioritize tasks that came out of the RICE framework with a higher score.

Labor-intensive:

Product managers should consider a feature’s outcomes before moving ahead. Analyzing every feature against all four factors of the RICE framework can be tedious.

Unavailability of data:

Measuring the reach and impact factors of the RICE model is essential. But not all products or features have the data available for these metrics, which makes it hard to justify a higher RICE score.

The suggestion is to reduce your confidence score accordingly, which lowers the overall RICE score. However, because of that low score, the feature may never be released.

Conclusion

A prioritization process such as the RICE framework works wonders. It keeps product managers and teams on track and helps them make informed decisions.

You can achieve many more benefits once you familiarize yourself with the RICE model. Like any method, RICE prioritization has its pros and cons.

Intercom itself suggests not using this scoring model as a hard-and-fast rule. You can always adapt it to your company’s needs.
